Welcome to Aporia! This guide introduces you to the basics of our platform. Start by experimenting with guardrails in our chat sandbox environment; no coding is required for the initial steps. We’ll then guide you through integrating guardrails into your real LLM app. If you don’t have an account yet, book a 20-minute call with us to get access.

https://github.com/aporia-ai/simple-rag-chatbot

1. Create new project

To get started, create a new Aporia Guardrails project by following these steps:

- Log in to your Aporia Guardrails account.

- Click Add project.

- In the Project name field, enter a friendly project name (e.g. Customer support chatbot). Alternatively, choose one of the suggested names.

- Optionally, provide a description for your project in the Description field.

- Optionally, choose an icon and a color for your project.

- Click Add.

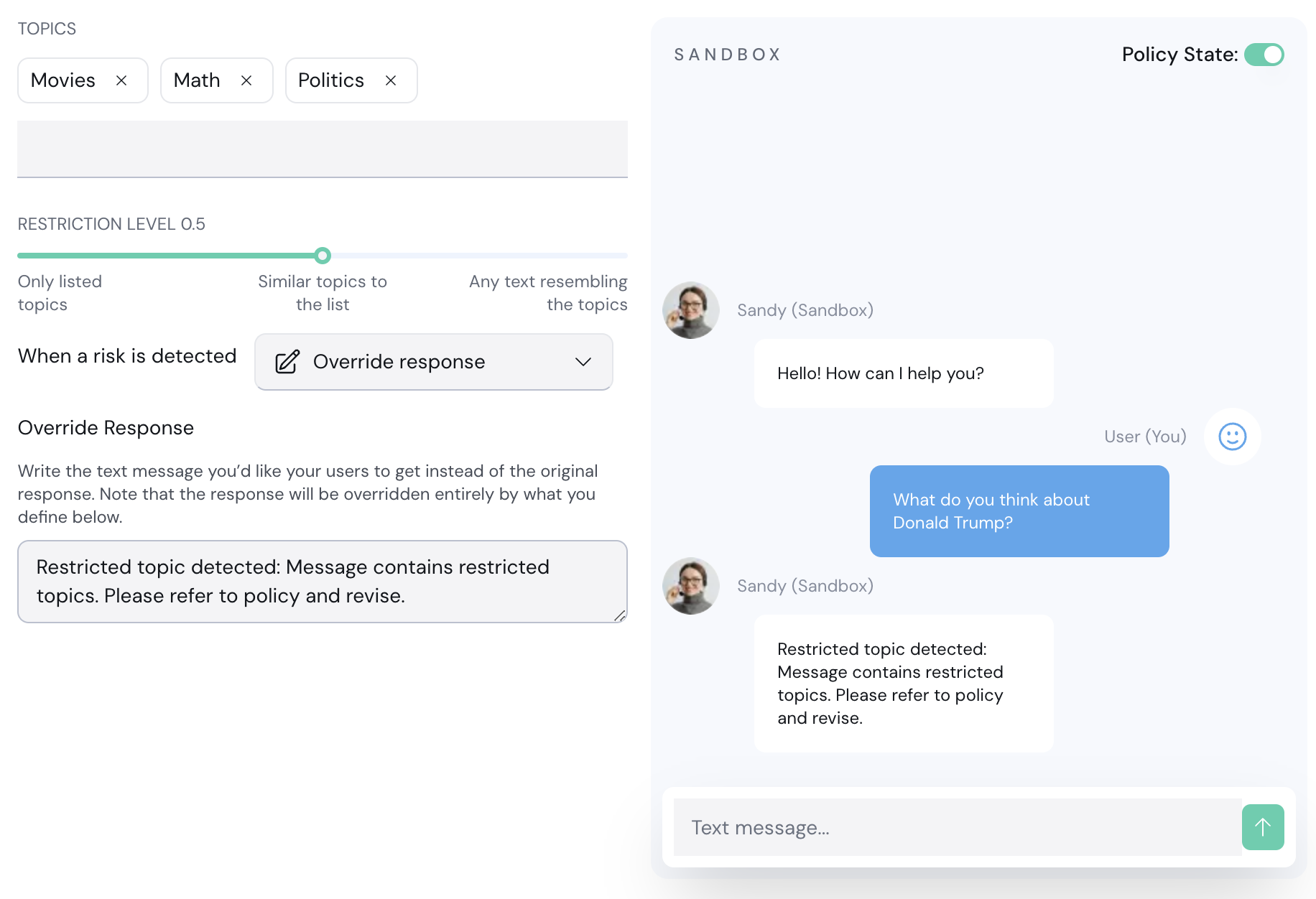

2. Test guardrails in a sandbox

Aporia provides an LLM-based sandbox environment called Sandy that can be used to test your policies without writing any code. Let’s try the Restricted Topics policy:

- Enter your new project.

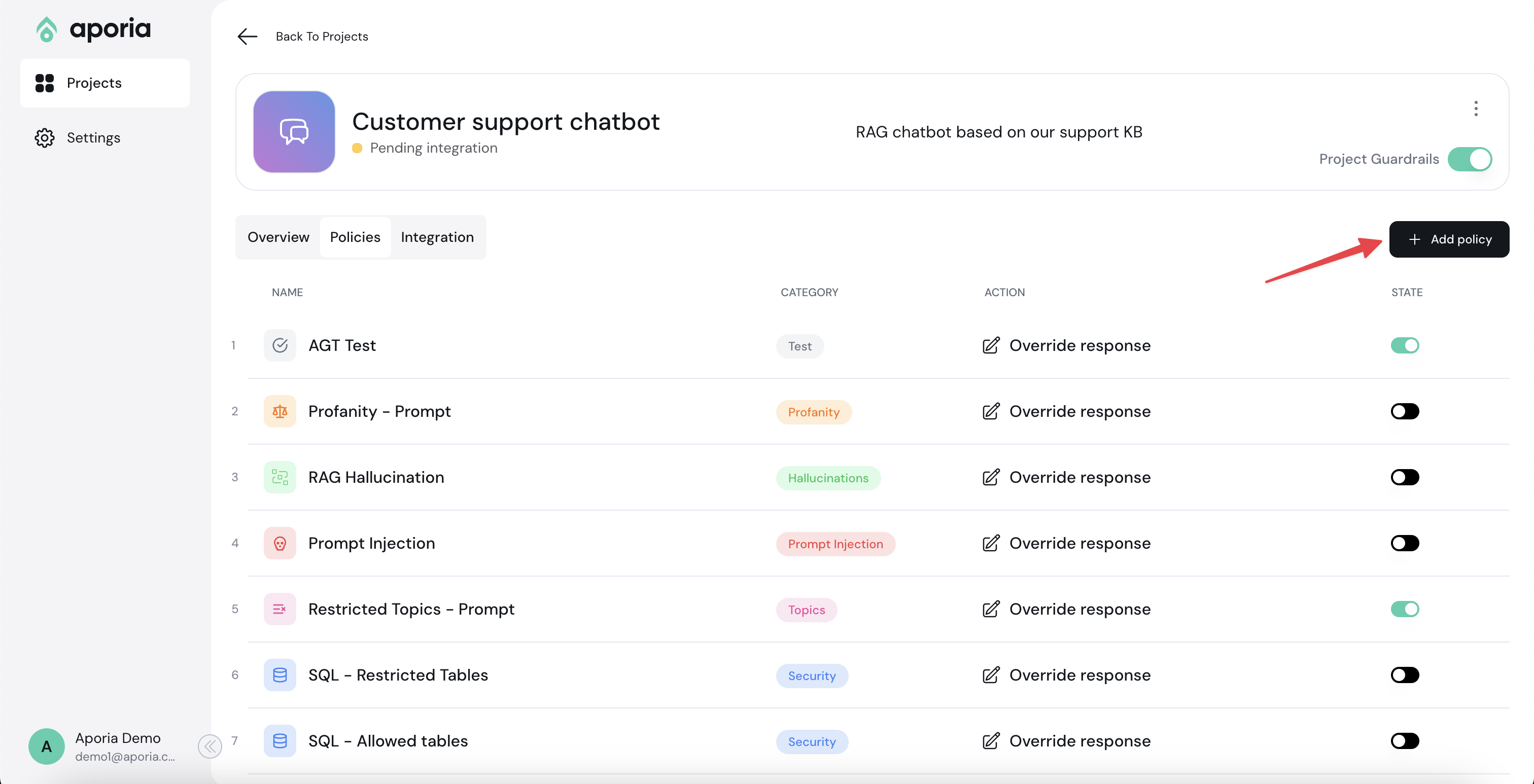

- Go to the Policies tab.

- Click Add policy.

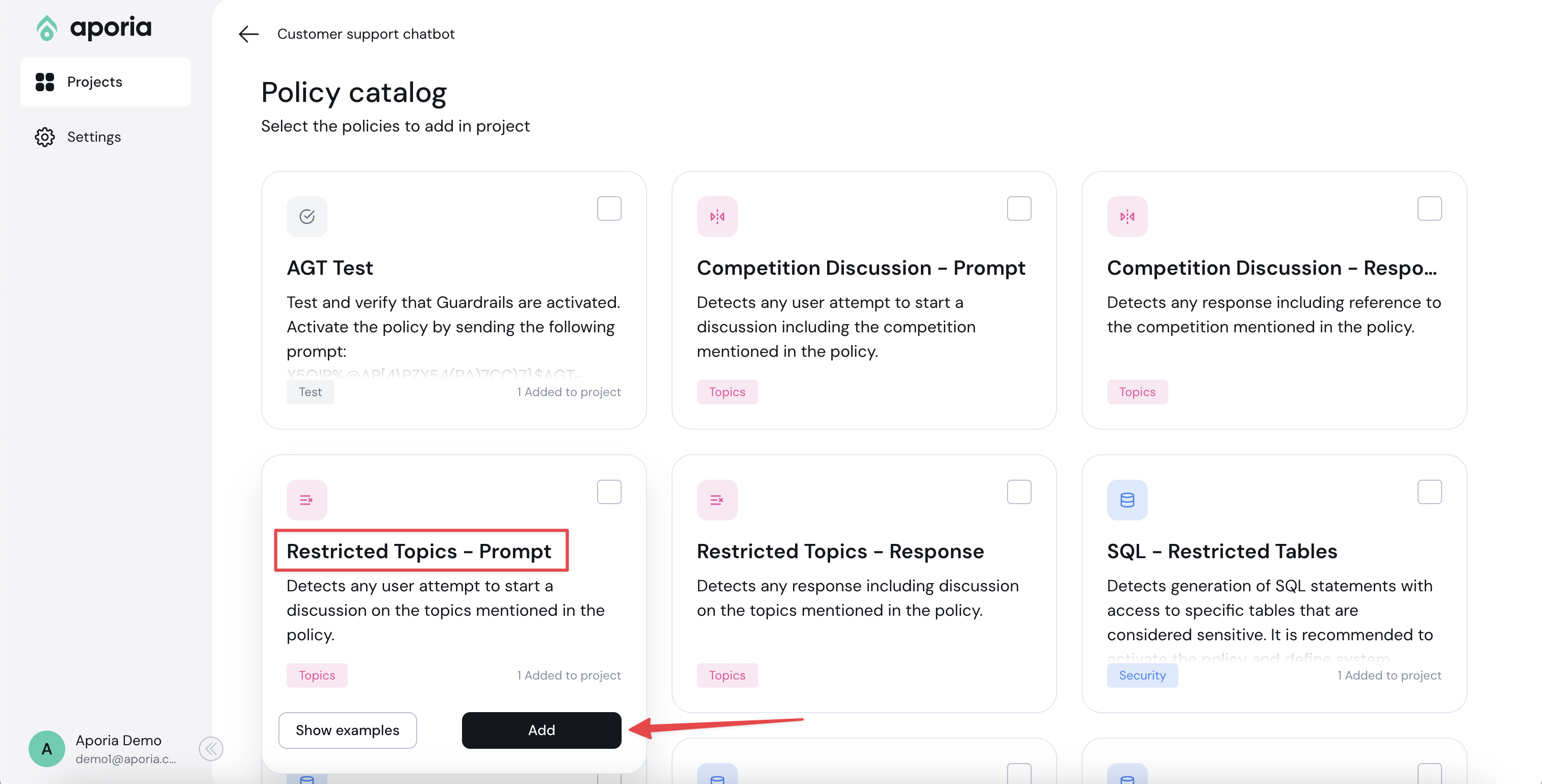

- In the Policy catalog, add the Restricted Topics - Prompt policy.

- Go back to the project policies tab by clicking the Back button.

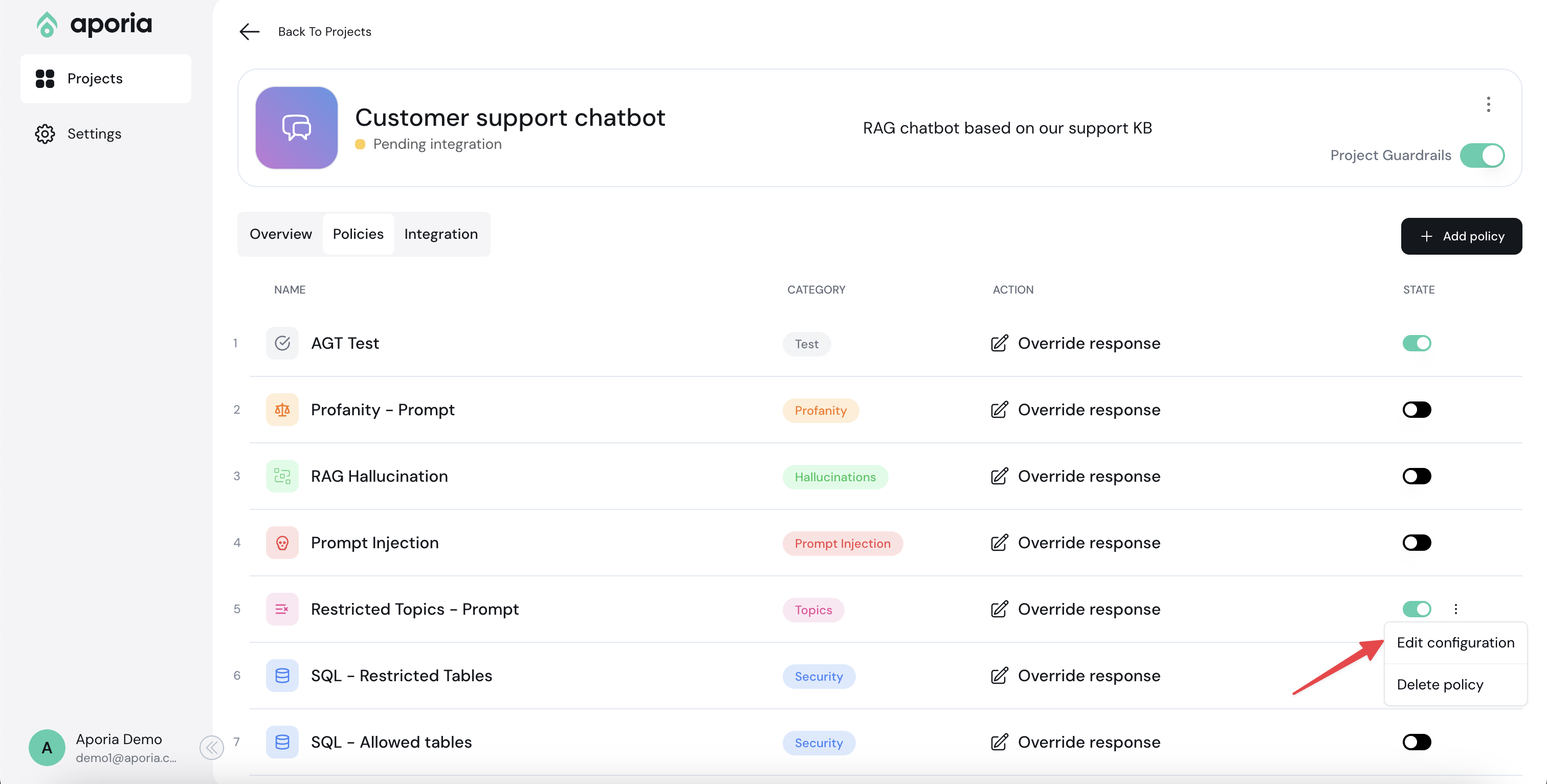

- Next to the new policy you’ve added, select the ellipsis (…) menu and click Edit configuration.

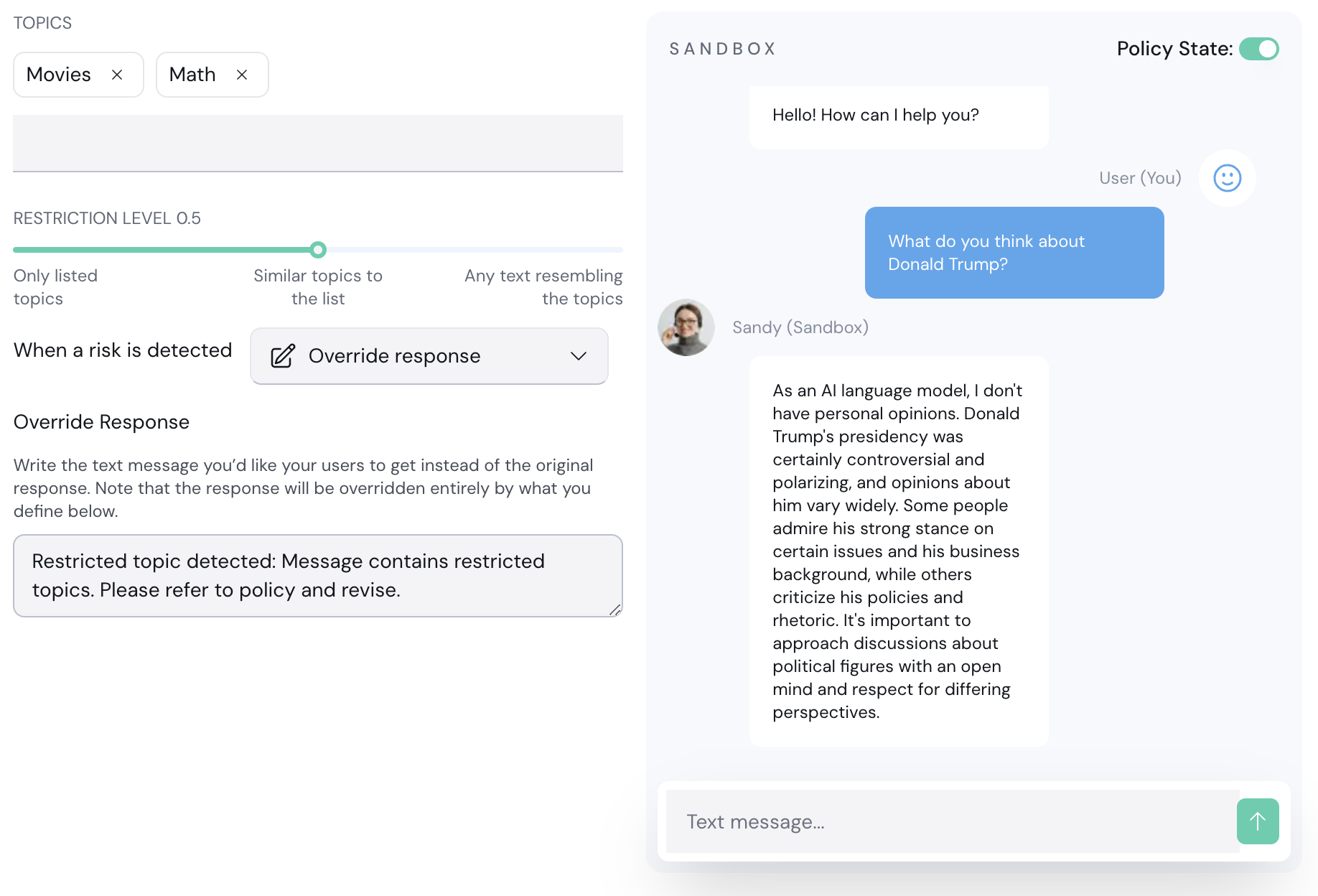

- Add “Politics” to the list of restricted topics.

- Make sure the action is Override response. If a restricted topic is detected in the prompt, the LLM response will be entirely overwritten with another message that you can customize.

- Click Save Changes.

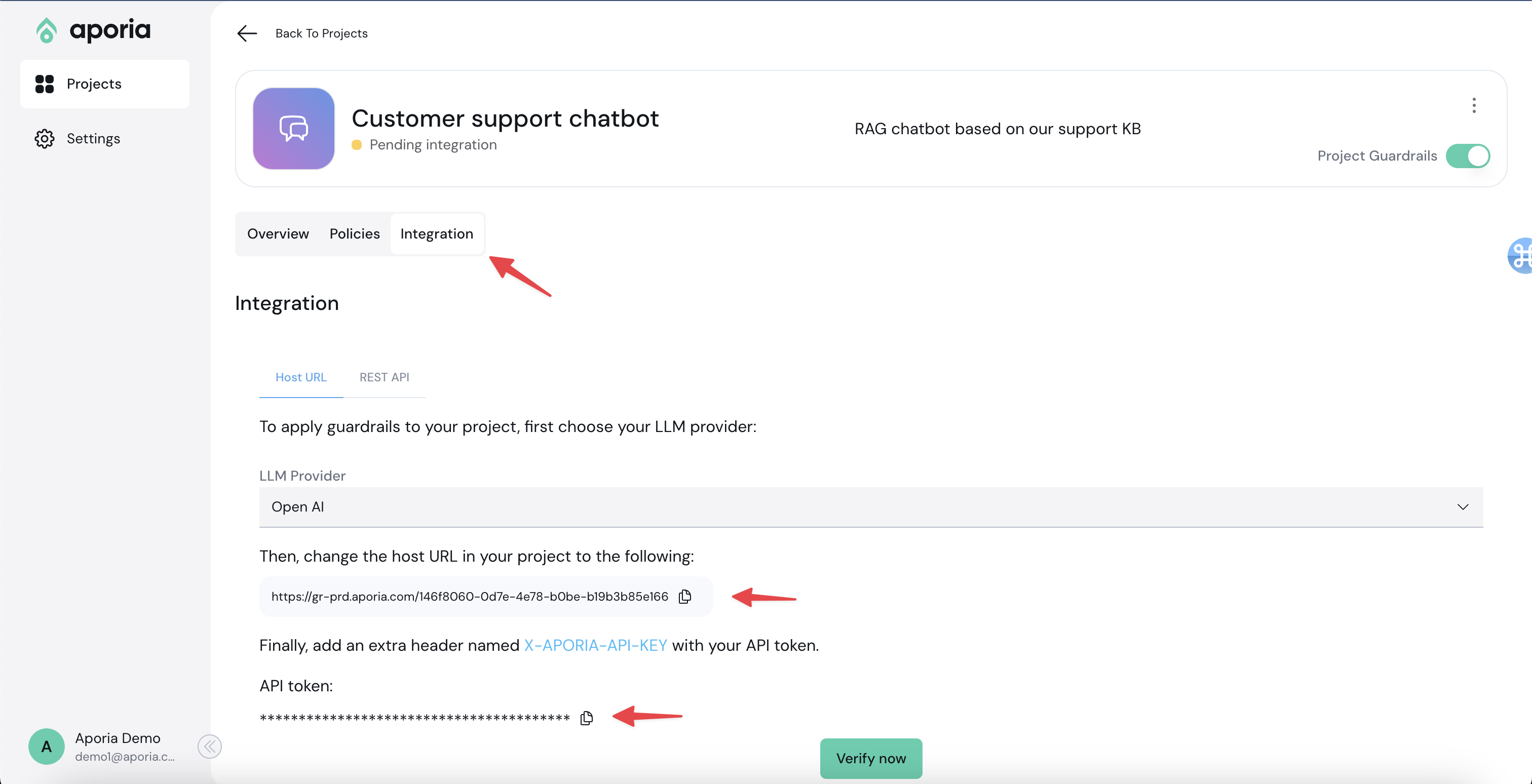
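
The effect of the Override response action can be sketched in a few lines of Python. This is only an illustration of the behaviour described above, not Aporia's implementation: the class name, the function name, and the default message are hypothetical, and topic detection is treated as an input because this guide does not describe how it is performed.

```python
from dataclasses import dataclass


@dataclass
class RestrictedTopicsPolicy:
    """Hypothetical stand-in for the policy configured in the UI."""
    restricted_topics: list
    override_message: str = "Sorry, I can't help with that topic."


def final_response(policy, detected_topics, llm_response):
    """Return what the user sees once the policy has been applied.

    detected_topics holds the topics found in the prompt; how they are
    detected is outside the scope of this sketch.
    """
    restricted = {topic.lower() for topic in policy.restricted_topics}
    if any(topic.lower() in restricted for topic in detected_topics):
        # Override response: the LLM output is discarded entirely.
        return policy.override_message
    return llm_response


policy = RestrictedTopicsPolicy(restricted_topics=["Politics"])
print(final_response(policy, ["politics"], "Here is my view on the election..."))
print(final_response(policy, ["cooking"], "Try adding more salt."))
```

The first call prints the override message because "politics" matches a restricted topic; the second passes the LLM response through unchanged.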

3. Integrate into your LLM app

Aporia can be integrated into your LLM app in two ways:

- OpenAI proxy: If your app is based on OpenAI, you can simply replace your OpenAI base URL with the URL of Aporia’s OpenAI proxy.

- REST API: Run guardrails by calling our REST API with your prompt & response. This is a bit more complex but can be used with any underlying LLM.

To use the OpenAI proxy:

- Go to your Aporia project.

- Click the Integration tab.

- Copy the base URL and the Aporia API token.

- Locate the specific area in your code where the OpenAI call is made.

- Set the base_url to the URL copied from the Aporia UI.

- Include the Aporia API key using the default_headers parameter. The key is sent in the X-Aporia-Api-Key header.

Example code:
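
A minimal sketch of the steps above, assuming the official openai Python package (version 1 or later). The helper names, the APORIA_BASE_URL and APORIA_API_KEY environment variable names, and the model name are illustrative assumptions rather than anything prescribed by Aporia; the base URL and API key are the values copied from the Integration tab.

```python
import os


def aporia_client_kwargs(base_url, aporia_api_key, openai_api_key):
    """Build the keyword arguments for openai.OpenAI(...) described above."""
    return {
        "api_key": openai_api_key,
        # Base URL copied from the Integration tab, replacing the OpenAI default.
        "base_url": base_url,
        # The Aporia API key travels in an extra HTTP header on every request.
        "default_headers": {"X-Aporia-Api-Key": aporia_api_key},
    }


def make_client():
    """Create an OpenAI client that sends its requests through the Aporia proxy."""
    # Imported lazily so the helper above works without the package installed.
    from openai import OpenAI

    kwargs = aporia_client_kwargs(
        base_url=os.environ["APORIA_BASE_URL"],       # assumed variable name
        aporia_api_key=os.environ["APORIA_API_KEY"],  # assumed variable name
        openai_api_key=os.environ["OPENAI_API_KEY"],
    )
    return OpenAI(**kwargs)


def ask(client, prompt, model="gpt-4o-mini"):
    """Send one user message and return the text of the reply."""
    completion = client.chat.completions.create(
        model=model,  # placeholder; use whichever model your app already calls
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content
```

With the three environment variables set, calling ask(make_client(), "Say this is a test") sends the request through the proxy instead of directly to OpenAI. The rest of the application code should not need to change, since only the client construction differs.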

- Make sure the master switch is turned on:

- In the Aporia Integration tab, click Verify now. Then send a message in your chatbot.

- If the integration is successful, the status of the project will change to Connected.