Overview

In this method, Aporia acts as a proxy: it forwards your requests to OpenAI and invokes guardrails at the same time. The response you receive is either OpenAI’s original response or a version modified to enforce Aporia’s policies. This integration supports streaming for real-time applications, which makes it particularly useful for chatbots.

Prerequisites

To use this integration method, ensure you have:

Integration Guide

Step 1: Gather Aporia’s Base URL and API Key

- Log in to the Aporia dashboard.

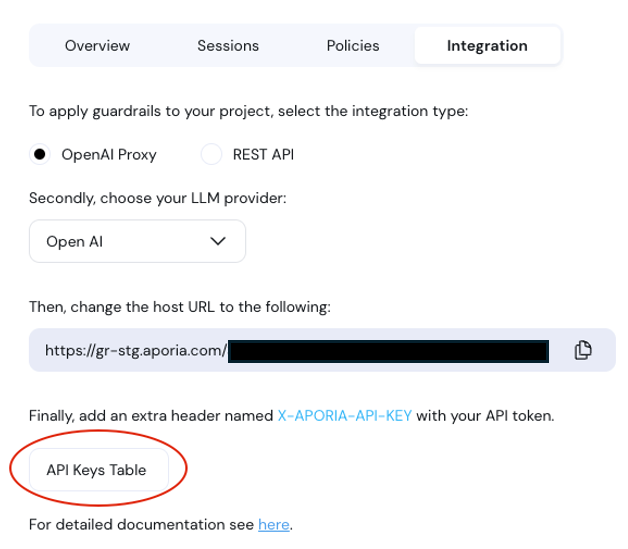

- Select your project and click on the Integration tab.

- Under Integration, ensure that Host URL is active.

- Copy the Host URL.

- Click on “API Keys Table” to navigate to your keys table.

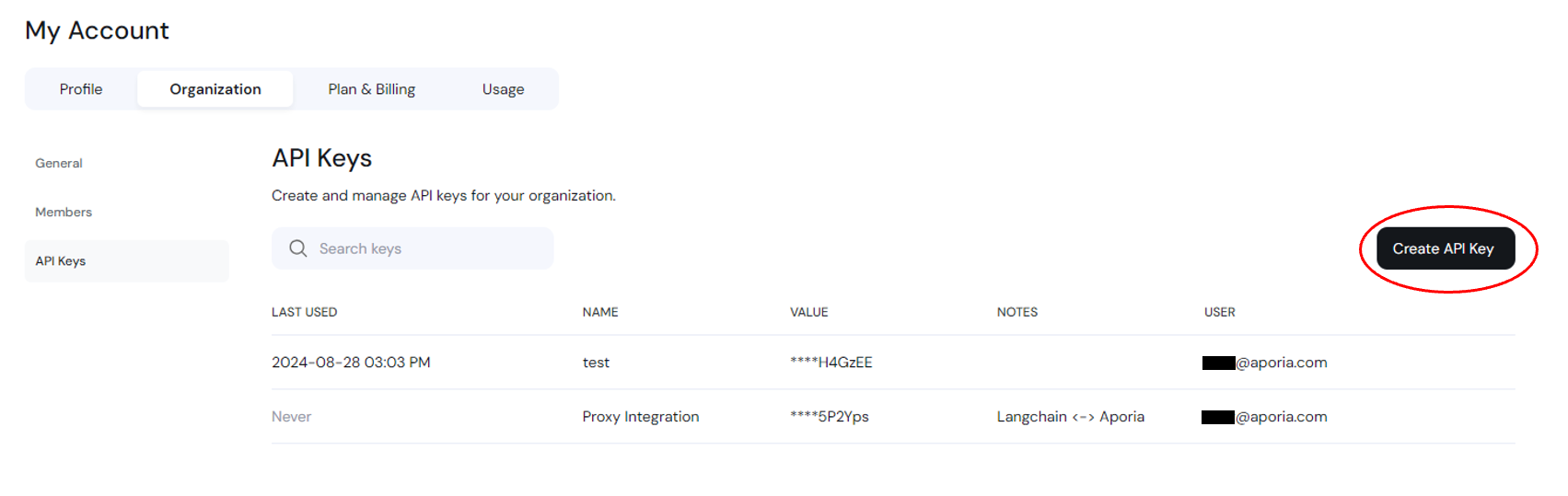

- Create a new API key and save it somewhere safe and accessible. If you lose this secret key, you’ll need to create a new one.

Step 2: Integrate into Your Code

- Locate the section in your codebase where you use OpenAI’s API.

- Replace the existing `base_url` in your code with the Host URL copied from the Aporia dashboard.

- Add the `X-APORIA-API-KEY` header, set to the API key you created in Step 1, to your HTTP requests using the `default_headers` parameter of OpenAI’s SDK.
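The two changes above can be sketched as follows. This is a minimal sketch that assumes the official `openai` Python SDK (v1.x), where `OpenAI(...)` accepts `base_url` and `default_headers`. The environment variable names (`APORIA_HOST_URL`, `APORIA_API_KEY`, `OPENAI_API_KEY`), the helper names, and the example model name are hypothetical. It also assumes your existing OpenAI key stays in `api_key` unchanged, since the steps above only replace `base_url` and add a header.

```python
import os
from typing import Any


def aporia_client_kwargs(host_url: str, aporia_api_key: str, openai_api_key: str) -> dict[str, Any]:
    """Build keyword arguments for openai.OpenAI that route requests through Aporia (hypothetical helper)."""
    return {
        "api_key": openai_api_key,  # assumption: your existing OpenAI key, unchanged
        "base_url": host_url,  # Host URL copied from the Aporia dashboard
        "default_headers": {"X-APORIA-API-KEY": aporia_api_key},  # sent with every request
    }


def make_client():
    """Create an OpenAI client pointed at the Aporia proxy."""
    # Imported here so aporia_client_kwargs() stays usable without the SDK installed.
    from openai import OpenAI

    # Hypothetical environment variable names; store the values however you prefer.
    return OpenAI(
        **aporia_client_kwargs(
            host_url=os.environ["APORIA_HOST_URL"],
            aporia_api_key=os.environ["APORIA_API_KEY"],
            openai_api_key=os.environ["OPENAI_API_KEY"],
        )
    )


def stream_reply(client, prompt: str, model: str = "gpt-4o-mini") -> str:
    """Stream a chat completion through the proxy and return the concatenated text."""
    stream = client.chat.completions.create(
        model=model,  # example model name; use whichever model you already call
        messages=[{"role": "user", "content": prompt}],
        stream=True,
    )
    parts = []
    for chunk in stream:
        # Some chunks carry no choices or an empty delta; skip those.
        if chunk.choices and chunk.choices[0].delta.content:
            parts.append(chunk.choices[0].delta.content)
    return "".join(parts)
```

Usage, once the environment variables are set: `print(stream_reply(make_client(), "Hello!"))`. Apart from the client construction, the calling code stays the same as with a direct OpenAI connection.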

Code Example

Here is a basic example of how to configure the OpenAI client to use Aporia’s OpenAI Proxy method:

Azure OpenAI

To integrate Aporia with Azure OpenAI, use the `X-AZURE-OPENAI-ENDPOINT` header to specify your Azure OpenAI endpoint.
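As a rough sketch, the Azure setup adds one header alongside `X-APORIA-API-KEY`. The sketch assumes you keep the standard `OpenAI` client from the `openai` Python SDK (v1.x), pointed at the Aporia Host URL, and add only the endpoint header. Whether the Azure OpenAI key belongs in `api_key`, and how API versions are handled, are assumptions to confirm against Aporia’s documentation. The helper names are hypothetical.

```python
def aporia_azure_headers(aporia_api_key: str, azure_endpoint: str) -> dict[str, str]:
    """Headers for routing Azure OpenAI traffic through Aporia (hypothetical helper)."""
    return {
        "X-APORIA-API-KEY": aporia_api_key,
        # Your Azure OpenAI resource endpoint, e.g. https://<resource>.openai.azure.com
        "X-AZURE-OPENAI-ENDPOINT": azure_endpoint,
    }


def make_azure_client(host_url: str, api_key: str, aporia_api_key: str, azure_endpoint: str):
    """Create an OpenAI SDK client that sends Azure OpenAI traffic via the Aporia proxy."""
    # Imported here so aporia_azure_headers() stays usable without the SDK installed.
    from openai import OpenAI

    return OpenAI(
        api_key=api_key,  # assumption: your Azure OpenAI key; confirm with Aporia's docs
        base_url=host_url,  # Aporia Host URL, as in the OpenAI setup
        default_headers=aporia_azure_headers(aporia_api_key, azure_endpoint),
    )
```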